Why this matters: Fast "System 1" AI often hallucinates on complex tasks. Understanding deliberative "System 2" architectures is critical for building reliable agentic workflows.

Attention Activity: The Blind Spot of Fast AI

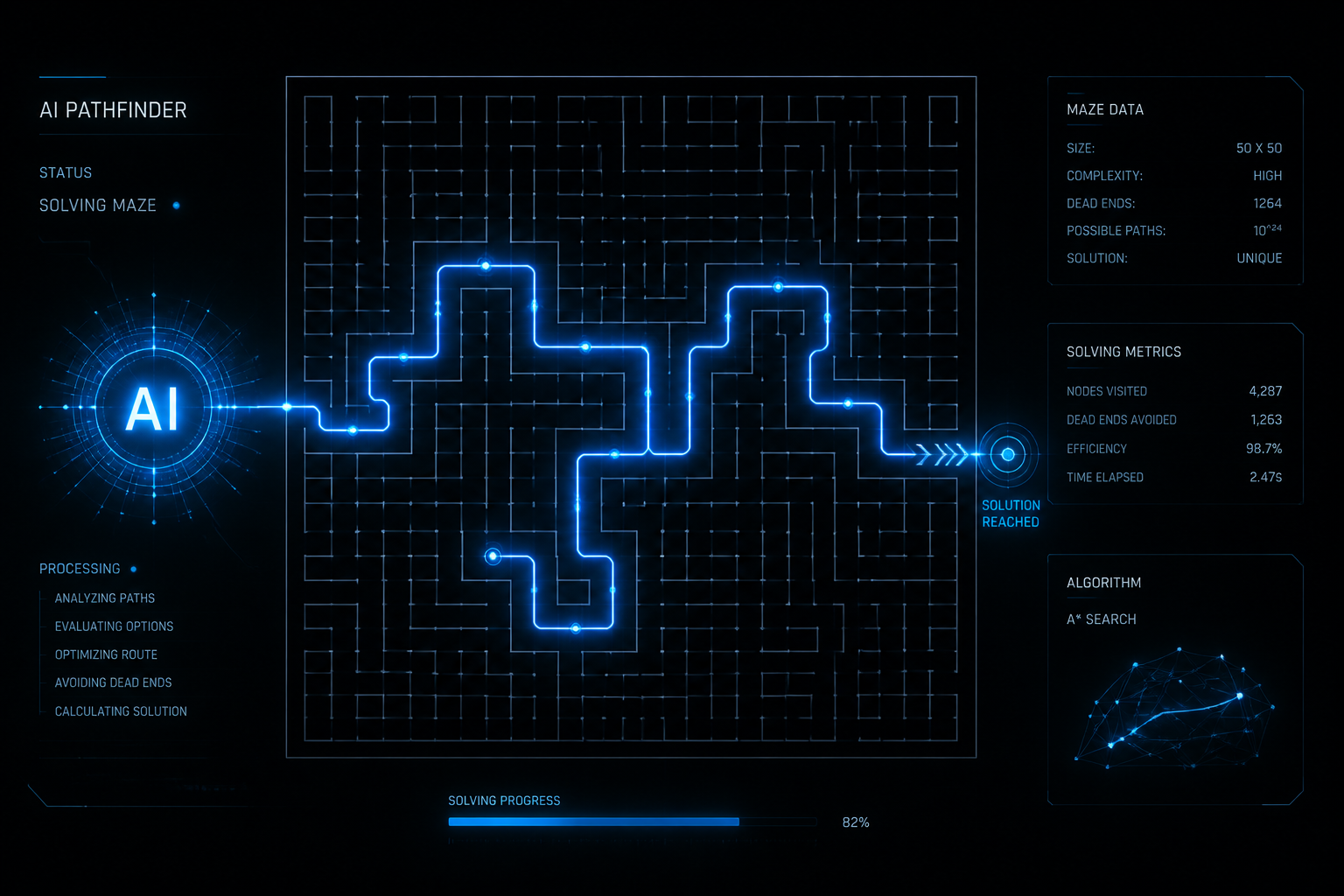

Standard language models generate tokens rapidly, functioning like intuitive "System 1" thinking. This works well for simple chats but often fails on complex logical mazes that require planning several steps ahead. Try the experiment below.

Notice: The Fast AI immediately guesses the exit but hits a wall. The Deliberative AI pauses, plans a path, and successfully navigates. This lesson explores the architectures powering that deliberate reasoning.

Chain-of-Thought (CoT) Evolution

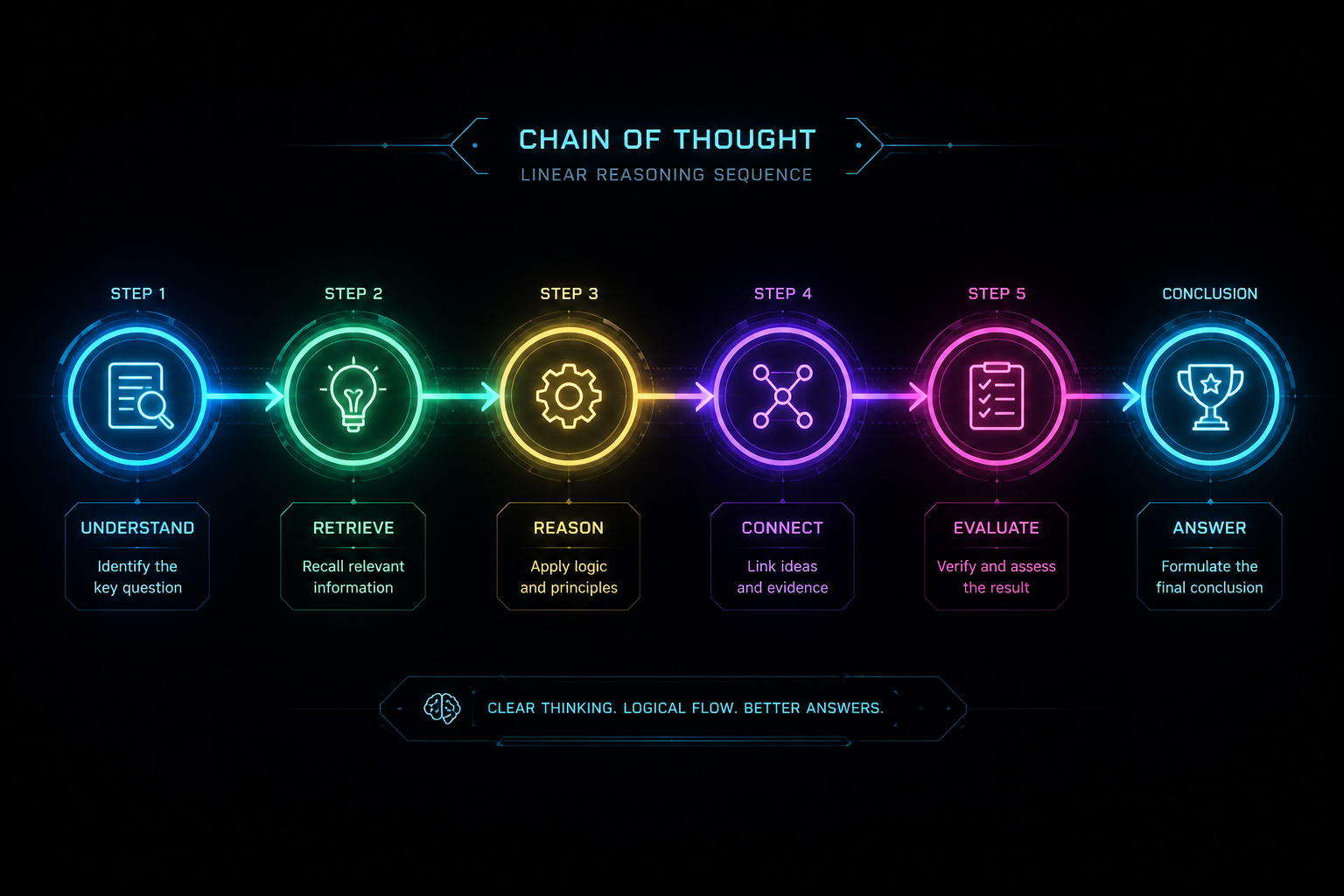

Instead of predicting the final answer directly, Chain-of-Thought (CoT) prompts the model to generate a sequence of intermediate reasoning steps first. By unrolling the logic, the model effectively gives itself "scratchpad" space to think, which tends to reduce unsupported logical leaps and hallucinations.

Activity: Unroll the Chain

While powerful, basic CoT is strictly linear. If it makes a mistake in Step 1, every later step builds on that mistake, and the chain has no mechanism for going back to revise it.
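The compounding effect can be seen in a toy sketch. The code below is only an illustration, not how a language model works internally: each "reasoning step" is a plain Python function standing in for a model-generated step, and the three-step arithmetic problem and the names `run_chain`, `correct_steps`, and `faulty_steps` are invented for this example.

```python
from typing import Callable, List

# A "step" is a stand-in for one model-generated reasoning step:
# it takes the previous intermediate result and produces the next one.
Step = Callable[[float], float]


def run_chain(start: float, steps: List[Step]) -> List[float]:
    """Apply the steps strictly in order, recording every intermediate value."""
    trace = [start]
    for step in steps:
        # Each step sees only the previous result, so it cannot detect
        # or repair a mistake made earlier in the chain.
        trace.append(step(trace[-1]))
    return trace


# Hypothetical problem: 12 items at 4 each, add 10 for shipping, split in half.
correct_steps: List[Step] = [lambda x: x * 4, lambda x: x + 10, lambda x: x / 2]

# Same chain, but Step 1 slips and multiplies by 3 instead of 4.
faulty_steps: List[Step] = [lambda x: x * 3, lambda x: x + 10, lambda x: x / 2]

correct_trace = run_chain(12, correct_steps)  # [12, 48, 58, 29.0]
faulty_trace = run_chain(12, faulty_steps)    # [12, 36, 46, 23.0]

print("correct:", correct_trace)
print("faulty: ", faulty_trace)
```

Steps 2 and 3 are identical in both chains, yet every value after the Step 1 slip is wrong. The intermediate trace is what makes the slip visible to a reader or a checker, but the linear chain itself never revisits it.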

Tree-of-Thought (ToT) Reasoning

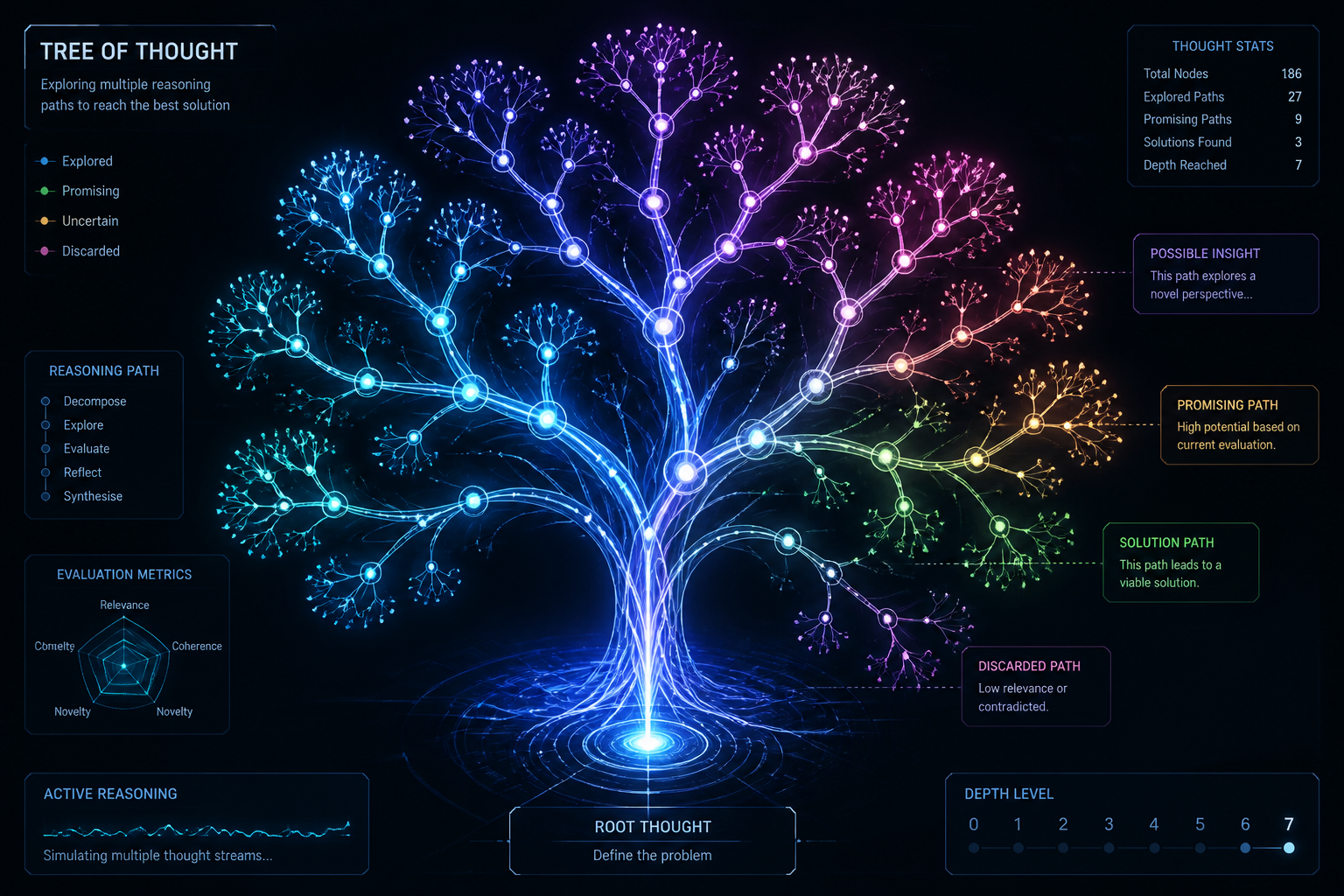

To overcome the linear limitations of CoT, Tree-of-Thought (ToT) architectures let the AI explore several candidate reasoning paths instead of committing to a single chain. The model branches out, evaluates the viability of each branch, and can backtrack if a path leads to a dead end.
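The branch, evaluate, and backtrack cycle can be sketched as a small depth-first search. This is a minimal illustration under stated assumptions: the number puzzle, the functions `propose`, `is_viable`, and `tree_search`, and the fixed depth limit are all hypothetical. In a real ToT system the model itself would propose and score candidate thoughts.

```python
from typing import List, Optional


def propose(state: int) -> List[int]:
    """Branch: candidate next states (stand-in for model-proposed thoughts)."""
    return [state * 2, state + 3]


def is_viable(state: int, target: int) -> bool:
    """Evaluate: in this toy puzzle a branch is dead once it overshoots."""
    return state <= target


def tree_search(state: int, target: int, depth: int) -> Optional[List[int]]:
    """Depth-first search over reasoning paths, with pruning and backtracking."""
    if state == target:
        return [state]
    if depth == 0:
        return None  # out of budget on this path
    for candidate in propose(state):
        if not is_viable(candidate, target):
            continue  # prune a branch the evaluator rejects
        path = tree_search(candidate, target, depth - 1)
        if path is not None:
            return [state] + path
    return None  # every branch failed: backtrack to the caller


# Reach 10 from 1 using "double" or "add 3" in at most 4 moves.
path = tree_search(1, 10, depth=4)
print(path)
```

In this run the search first follows 1, 2, 4, 8, finds that both continuations from 8 overshoot the target, backs up to 4, and then succeeds through 7. A single linear chain that committed to 8 would have had no way to recover.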

Activity: Explore Branches

Knowledge Check

Why does Tree-of-Thought (ToT) handle complex logic puzzles better than basic Chain-of-Thought (CoT)?

Self-Reflection Mechanisms

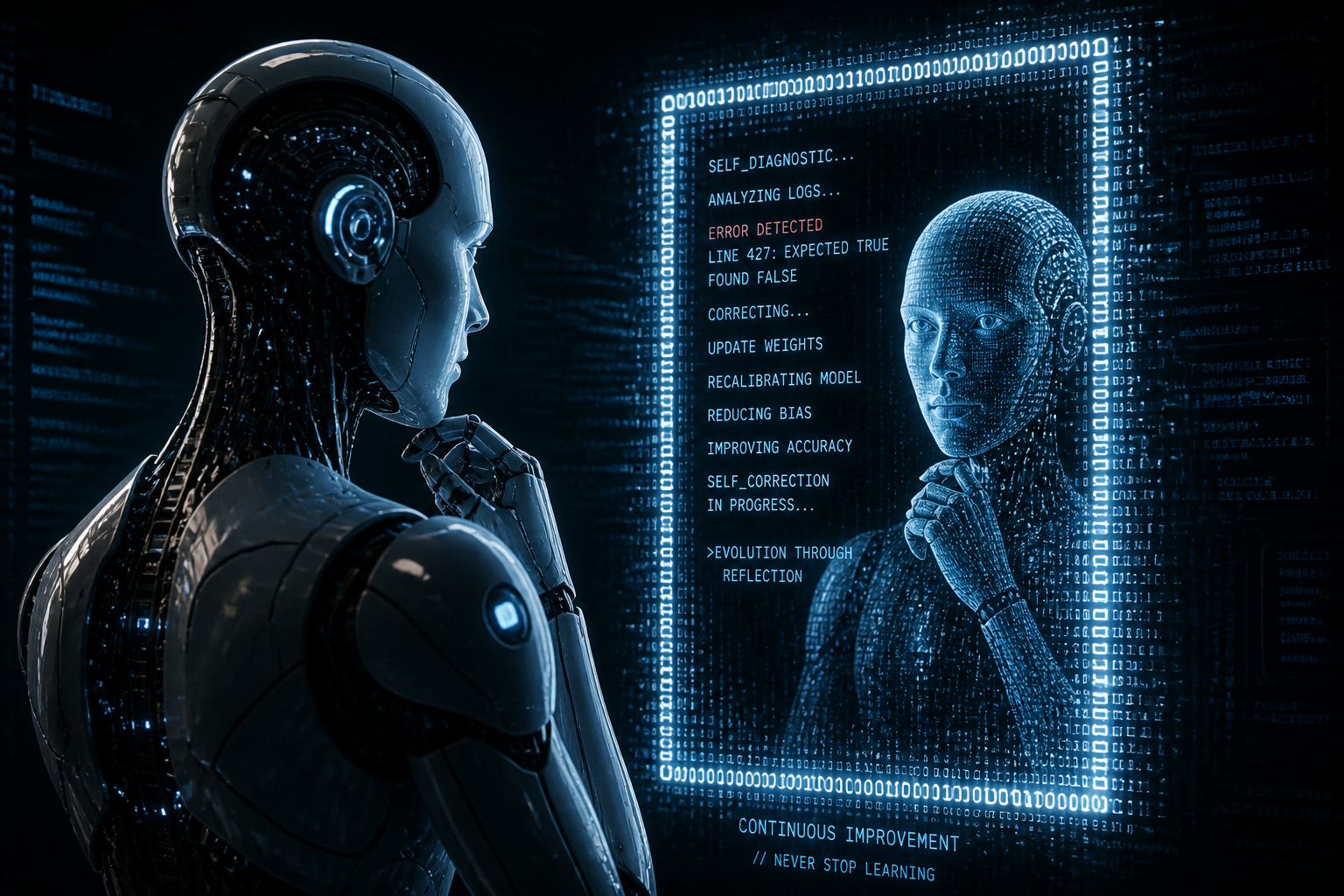

How does an AI know if a branch in a Tree-of-Thought is bad? Self-reflection. In this architecture, the model (or a secondary evaluator model) reviews its own outputs against constraints before proceeding. If an error is detected, it generates a critique and attempts a correction.
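A critique-and-correct loop of this kind might look like the sketch below. Everything here is an assumption made for illustration: the constraints, the `critique`, `revise`, and `reflect` functions, and the rule-based reviser are hypothetical stand-ins. A real system would ask the model, or a separate evaluator model, to produce the critique and the revised draft.

```python
from typing import Callable, List, Tuple

# A constraint is a (name, check) pair; the check returns True when satisfied.
Constraint = Tuple[str, Callable[[str], bool]]

CONSTRAINTS: List[Constraint] = [
    ("at most 8 words", lambda text: len(text.split()) <= 8),
    ("ends with a period", lambda text: text.endswith(".")),
]


def critique(draft: str, constraints: List[Constraint]) -> List[str]:
    """Review the draft and return the names of all violated constraints."""
    return [name for name, check in constraints if not check(draft)]


def revise(draft: str, issues: List[str]) -> str:
    """Hypothetical reviser: applies a fixed repair for each reported issue."""
    if "at most 8 words" in issues:
        draft = " ".join(draft.split()[:8])
    if "ends with a period" in issues:
        draft = draft.rstrip() + "."
    return draft


def reflect(draft: str, constraints: List[Constraint], max_rounds: int = 3):
    """Critique, revise, and re-check until the draft passes or rounds run out."""
    history = [draft]
    for _ in range(max_rounds):
        issues = critique(draft, constraints)
        if not issues:
            break  # the draft passes review; safe to proceed
        draft = revise(draft, issues)
        history.append(draft)
    return draft, history


final, history = reflect(
    "The meeting is moved to Tuesday at noon in room four", CONSTRAINTS
)
print(final)
```

The `max_rounds` cap is a design choice worth keeping in any version of this loop: without it, a reviser that cannot satisfy the constraints would cycle indefinitely.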

Activity: The Critique Loop

The model drafted a response but detected a logic flaw. Click the flawed node to initiate self-reflection.

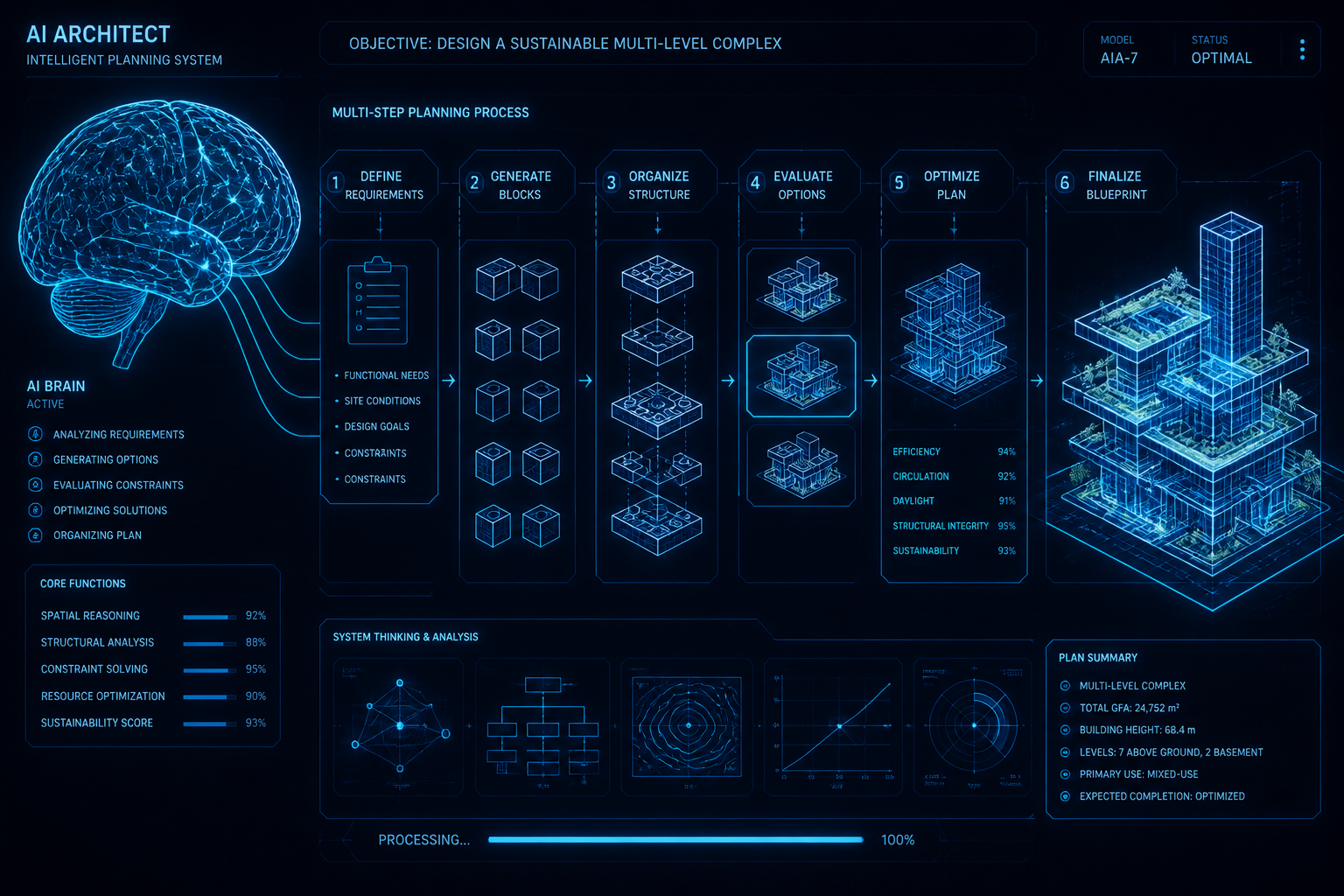

Deliberative Inference & Multi-Step Planning

Combining CoT, ToT, and reflection yields deliberative inference. In advanced agentic workflows, the AI acts like an architect: it breaks a large goal into a multi-step plan, executes the tasks in sequence, and verifies each layer before building the next.

Activity: Architect the Plan

This approach is slower and uses more compute than a single fast response. In exchange, it offers substantial gains in reliability and in the ability to solve complex problems.
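The plan, execute, and verify pattern can be sketched as follows. This is a minimal sketch, not a reference implementation: `PlanStep`, `execute_plan`, and the three-step data-processing plan are hypothetical, and the steps are ordinary functions where a real agent would call a model or a tool.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple


@dataclass
class PlanStep:
    name: str
    run: Callable[[Any], Any]      # does the work for this step
    verify: Callable[[Any], bool]  # checks the result before moving on


def execute_plan(start: Any, plan: List[PlanStep]) -> Tuple[Any, List[str], bool]:
    """Run steps in order; halt at the first result that fails verification."""
    state, log = start, []
    for step in plan:
        result = step.run(state)
        if not step.verify(result):
            log.append(f"{step.name}: failed verification")
            return state, log, False  # do not build on an unverified layer
        log.append(f"{step.name}: ok")
        state = result
    return state, log, True


def is_sorted(xs: List[int]) -> bool:
    return all(a <= b for a, b in zip(xs, xs[1:]))


parse = PlanStep(
    "parse",
    lambda s: [int(x) for x in s.split(",")],
    lambda r: len(r) > 0,
)
sort = PlanStep("sort", sorted, is_sorted)
summarize = PlanStep(
    "summarize",
    lambda xs: {"min": xs[0], "max": xs[-1]},
    lambda r: r["min"] <= r["max"],
)

result, log, ok = execute_plan("3,1,2", [parse, sort, summarize])
print(result, log, ok)

# A buggy "sort" that does nothing is caught before "summarize" can run.
broken_sort = PlanStep("sort", lambda xs: xs, is_sorted)
_, bad_log, bad_ok = execute_plan("3,1,2", [parse, broken_sort, summarize])
print(bad_log, bad_ok)
```

The extra `verify` call on every step is where the added latency and compute go. It is also what stops the second run from summarizing unsorted data.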

Knowledge Check

What is a likely outcome when an AI architecture relies on linear Chain-of-Thought but lacks self-reflection during a multi-step task?

Assessment Begins

You have completed the tutorial portion of this lesson. In the following section, you will be tested on your knowledge of reasoning-centric AI architectures, including Chain-of-Thought, Tree-of-Thought, and deliberative mechanisms.

There are 5 questions. You must score 80% or higher to earn your certificate. Good luck!

Key Takeaways

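- Chain-of-Thought gives the model scratchpad space through intermediate reasoning steps, but it is linear, so an early mistake compounds.

- Tree-of-Thought branches, evaluates each branch, and backtracks from dead ends; self-reflection supplies the critique that flags a bad branch or step.

- Deliberative inference combines these into plan, execute, and verify workflows, trading speed and compute for reliability.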

Assessment Question 1

You are designing an AI agent to solve a complex scheduling puzzle where many constraints overlap. Which architecture is best suited for exploring multiple parallel solutions and evaluating them before outputting the final schedule?

Assessment Question 2

An AI agent creates a deliberative plan but fails repeatedly because it blindly starts Step 3 even when Step 2 produced a formatting error. What specific mechanism is missing from this agent's architecture?

Assessment Question 3

In deliberative inference architectures, what is the primary operational trade-off when using extensive multi-step planning and reflection compared to standard fast response generation?

Assessment Question 4

Which concept best describes a "Chain-of-Thought" (CoT) process in modern LLMs?

Assessment Question 5

If an agent uses a "ReAct" (Reasoning + Acting) loop, what is its typical behavior cycle when solving a multi-step task?

Lesson Complete
